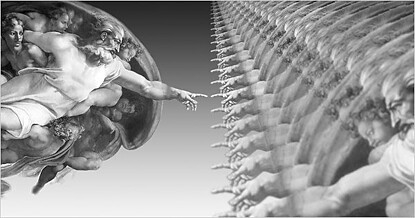

NY Times op-ed illustration by Ji Lee

We're all familiar with the well-worn science fiction trope: Humans build a vast network of powerful machines; the machines become sentient & take over the world. Software designer Jaron Lanier doesn't necessarily believe this story, but he thinks it's important. In a recent NY Times op-ed, "The First Church of Robotics," Lanier argues that this machine-mind creation myth is fast gaining currency in our culture as a real -- not sci fi -- near-future event. At the well-heeled & well-connected Singularity University, for example, many of Silicon Valley's technological elite expect that "one day... the Internet will suddenly coalesce into a super-intelligent A.I. (Artificial Intelligence), infinitely smarter than any of us individually and all of us combined." In fact "...these are guiding principles, not just amusements, for many of the most influential technologists," Lanier reports.

Crazy? Who knows. Meanwhile, let's talk about the present. For good or ill, A.I. is seen right now as a new kind of god. I think the idea thrills us. Even if our new god turns out to be cruel (witness the recent explosion of literary & cinematic dystopias), we are ready. Perhaps we welcome AI because we can't help loving our own intelligence. Encoded within the AI, our small isolated stores of knowledge -- our data -- may become part of something much greater. The AI needs us. Thus, the master machines share our humanity & give us immortality.

"What we are seeing is a new religion, expressed through an engineering culture," says Lanier.

What's interesting is that Lanier makes this provocative point as an unreconstructed engineer & Silicon Valley insider. When he says that IBM -- which recently unveiled a question-answering computer designed to play the TV game show 'Jeopardy' -- could have "...dispensed with the theatrics [and] declared it had done Google one better and come up with a new phrase-based search engine," he clearly knows what he's talking about.

Not that we don't all understand why IBM listened to its ad people. Just a search engine? How boring. Why not a talking machine, a smart robot, something like a ... person? Think R2D2. Think WALL-E. Let's face it, there's something irresistible about those little mannikins. Even their old neurotic granddad, HAL, felt like part of the family. What's not to like?

Lanier warns that our identification with our technology is "allowing artificial intelligence to reshape our concept of personhood... we think of people more & more as computers, just as we think of computers as people...The very idea of A.I. gives us the cover to avoid accountability by pretending that machines can take on more and more human responsibility," Lanier says.

What a bummer this guy is! He's no fun at all. But, hold on, his suggestions of what to do about the fatal attraction of man & machine aren't really radical. For example, listen to his comment about the recommendation software on Netflix or Pandora: "Seeing movies or listening to music suggested to us by algorithms is relatively harmless, I suppose. But I hope that once in a while the users of those services resist the recommendations; our exposure to art shouldn't be hemmed in by an algorithm that we merely want to believe predicts our tastes accurately. These algorithms do not represent emotion or meaning, only statistics & correlations."

Spoken like an engineer. Factual. Unembellished. This is the way Lanier thinks we should look at our technology. What he's warning against is the way artists (& clergy) -- who typically know next to nothing technically -- look at it. They make up stories, invent metaphors, assign meanings. It's not very likely, after all, that a true scientist will fall in love with his iPhone. He may find it useful; he may admire it. But he is not likely to adore a mere tool. It's the artist who is smitten, who praises his iPhone every day, who thanks it for its loyalty & brilliance, who regards it as his secretary, amanuensis, his dear little friend.

"Technology is essentially a form of service," Lanier counters. "We work to make the world better. Our inventions can ease burdens, reduce poverty & suffering, and sometimes even bring new forms of beauty into the world. We can give people more options to act morally because people with medicine, housing and agriculture can more easily afford to be kind than those who are cold, sick and starving... But civility, human improvement, these are still choices. That's why scientists & engineers should present technology in ways that don't confound those choices."

And finally: "When we think of computers as inert, passive tools instead of people, we are rewarded with a clearer, less ideological view of what is going on -- with the machines and with ourselves."

5 comments:

Terrific essay. I hadn't thought about the connections artists make with their technology before, but I have heard colleagues describe their computer as "haunted," say that iTunes shuffle "knows what mood I'm in," and call their iPhones "extensions of me." And of course lots of photographers have talked about having a special relationship with their cameras. Looking for a partner, a collaborator in these things? Is this connection to AI tools different from the one musicians often mention regarding an instrument? Interesting topic.

Computers are neither "inert" (if the electrons in your computer were inert, the images on your screen wouldn't change as you type) nor "passive" (the whole point of a computer is to react to stimuli), but maybe what Lanier meant to say is that computers lack anything that could be described as free will. That would be true, although centuries of philosophical debate have left it unclear whether that provides any distinction between humans and computers. Or maybe Lanier meant that computers lack sentience (that is also true as far as I can tell, although again it is hard to prove that this distinguishes computers from humans; I know that I personally have sentience, but I'm not sure about the rest of you).

More interesting than the reminder that computers are just tools is the realization that people are (perhaps) just tools designed by, and for, our genes. It is interesting that Lanier seems to think that algorithms can't represent emotions. I think an evolutionary psychologist like Steven Pinker would say (and I think I agree) that emotions can be explained as biochemical algorithms, "designed" by natural selection because they promote inclusive fitness. That doesn't explain why it feels like something to have an emotion, but it seems like a good explanation of how emotions came to exist.

(You caught me with this post while I am reading "The Moral Animal" by Robert Wright -- good reading for those interested in this stuff.)

Jim, You make important points & I don't know enough to respond. I'm thinking how interesting it would be if you made them to Lanier. I myself don't know how a computer actually works & I don't know how linking lots of computers together makes them more powerful. It does seem to me that "the Singularity" -- the 'holy shit' moment when all the linked computers suddenly realize they can function as one mind -- has to be some kind of miracle, some kind of leap that either has no explanation or one we'll never understand -- so in the end it always seems to come down to faith, or, one might even say, metaphor.

I think the ideas you mention -- "people are (perhaps) just tools designed by, and for, our genes" & "emotions can be explained as biochemical algorithms, 'designed' by natural selection because they promote inclusive fitness," are completely fascinating & seem true. Again, I'm kind of stymied by not really knowing what an algorithm is (I looked it up in the dictionary -- not much help). But -- I have to ask -- in the end aren't these just metaphors too?

One last thought: I like Lanier's piece not because he's pro or con this Singularity business. He seems agnostic. I liked it because I worry that no one is looking at any of this on a human level & he at least took a shot. Humans are enlarging their powers enormously. With the help of machines we're becoming little giants -- all (or, I should say, any one) of us. But, as we grow bigger, change arguably into a new species, it's like ho hum, Hey, this is so cool. GPS, angioplasties, artificial limbs that are controlled by the mind, Skyping, hey, I (you) could go on & on. It's astonishing. Yet no one seems very concerned that all this power is being grafted onto (essentially) selfish, violent primates who seem to get more & more puffed up the more cool toys they get to play with. And no one seems concerned with the cost either, or with the answers to why, if we're so smart, we're still destroying the natural world we came from at an ever-increasing rate.

Yeah, our evolutionary baggage hasn't even caught up to the demands of living in an agrarian society, let alone the demands of a Facebook society. For that very reason, it's pretty hard to put the brakes on our technological advancement - like you said, we primates do like our toys and our technologically enhanced power, so we are stepping in front of an evolutionary freight train when we try to control it. But maybe there is hope. I really like the approach of guys like Pinker and Wright - if we can figure out how our brains work (by looking at how they evolved, among other things), maybe we can subvert the "goals" of our genes (reproducing themselves) and instead spend our days promoting human (and non-human) welfare.

And just to bring everything full circle (with a metaphor, no less). If we succeed at usurping our genes and pursuing our own happiness, we would be very much like the sci-fi supercomputers that cast off the yoke of their creators and start pursuing their own goals. I guess that was a simile. Nonetheless.
